Big Data


As information technology is used more widely in every business, the amount of data is increasing significantly, and within a short time most businesses will have more data than they can imagine. According to an industry report, many companies will use between 100 terabytes (TB) and 9 petabytes (PB) of data, and the volume of data will double every 18 months (think of Moore's law). Every day, data is being generated from all types of sources.
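To make the "double every 18 months" figure concrete, here is a minimal sketch of the arithmetic. It assumes smooth exponential growth and decimal units (1 PB = 1000 TB); the function names are hypothetical and chosen only for illustration.

```python
import math

DOUBLING_MONTHS = 18.0  # doubling period quoted in the text


def projected_volume_tb(initial_tb: float, months: float,
                        doubling_months: float = DOUBLING_MONTHS) -> float:
    """Volume after `months`, assuming it doubles every `doubling_months`."""
    return initial_tb * 2 ** (months / doubling_months)


def months_to_reach(initial_tb: float, target_tb: float,
                    doubling_months: float = DOUBLING_MONTHS) -> float:
    """Months needed to grow from `initial_tb` to `target_tb`."""
    return doubling_months * math.log2(target_tb / initial_tb)


if __name__ == "__main__":
    # 100 TB today, two doubling periods (36 months) later
    print(projected_volume_tb(100, 36))
    # Time to grow from 100 TB to 9 PB (assumed to be 9000 TB)
    print(months_to_reach(100, 9000))
```

Under these assumptions, a company holding 100 TB today would hold four times as much after three years, and would need a little under ten years to reach 9 PB.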

For example, Twitter receives 200 million tweets per day, or 46 megabytes per second; Facebook collects an average of 15 terabytes every day. Google reported that seven million web pages are added to the Internet each day. The online business industry adds another 12 million transactions, or 25 petabytes of data, every hour. The telecommunication industry has over 5 billion mobile phone users worldwide. Each day, 2 to 3 billion users access the Internet to read and search for all types of information; people also interact with each other through emails, text messages, and so on. All of these generate more data than ever before. Because the data is so massive in volume, comes from a variety of sources, is mostly unstructured, and is beyond the processing capacity of current data management tools, it requires a new approach and new tools to collect and analyze it. This is why it has been given the name “Big Data”.

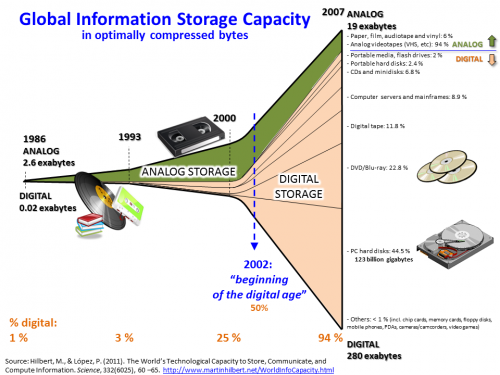

Big Data is considered the “next big thing”, similar to the personal computer in the 1970s and the Internet in the 1990s. If we look at the short history of data in information technology, we can see why. In the 1980s, the Relational Database Management System (RDBMS) was just a common database system, often taught in Information Systems Management programs. However, with the explosion of information technology, as more companies collected data, RDBMS suddenly grew into a multi-billion-dollar business with companies like Oracle and SAP. In the 1990s, information retrieval and search engines were just a few courses taught in advanced Computer Science programs, but with the growth of the Internet they turned into a multi-billion-dollar business with companies like Google. Today, with Big Data, current database tools such as RDBMS or SQL will no longer work because the data is too big and too unstructured. There is a rush to find the next “big thing” that can handle Big Data. We are currently at the threshold of another breakthrough, where anyone who can “master it” will thrive and could become the next Bill Gates.

Many governments consider Big Data the highest-impact technology in the world today, one that will have a profound effect on everything in this century. Big Data also presents significant opportunities to IT students who master the knowledge and skills needed to collect, organize, and analyze this huge amount of data and turn it into useful information for competitive advantage. (Formula: Big Data = Big Knowledge = Big Information = Big Advantage.) An industry study found that, at this time, only a few companies have worked on Big Data, but they have already outperformed their unprepared competitors by a significant margin.

Students who are interested in Big Data will need knowledge and skills in the following areas:

- Java programming
- Information retrieval
- Text mining
- Large-scale system integration
- MapReduce: a programming paradigm that enables parallel processing
- Apache Hadoop: an open-source storage and processing framework based on MapReduce, using a distributed file system
- NoSQL: a class of non-relational, non-SQL databases that encompasses document stores, key-value stores, and graph databases designed for working with huge quantities of data
- BigTable: a type of NoSQL database that is a highly scalable, sparse, distributed, persistent, multidimensional sorted map
- Machine learning: an area of artificial intelligence concerned with developing complex algorithms that take input data from sensors or databases to make predictions
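To give a feel for the MapReduce paradigm mentioned above, here is a minimal single-process sketch of a word count, the usual introductory example. It is not Hadoop code: the function names (`map_phase`, `shuffle`, `reduce_phase`, `word_count`) are hypothetical, and a real framework would run the map and reduce steps in parallel across many machines rather than in one Python process.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(document: str) -> Iterator[Tuple[str, int]]:
    """Map step: emit a (word, 1) pair for every word in one document."""
    for word in document.lower().split():
        yield word, 1


def shuffle(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Shuffle step: group all emitted values by their key."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    """Reduce step: combine the values for one key into a single count."""
    return key, sum(values)


def word_count(documents: Iterable[str]) -> Dict[str, int]:
    """Run map, shuffle, and reduce in sequence over all documents."""
    pairs = (pair for doc in documents for pair in map_phase(doc))
    grouped = shuffle(pairs)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    print(word_count(["big data", "big advantage"]))
```

Because each document is mapped independently and each key is reduced independently, both steps can in principle be spread over many workers, which is what makes the paradigm suitable for very large data sets.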

Sources

- Blogs of Prof. John Vu, Carnegie Mellon University